Last year I created a demo showing how CSS 3D transforms could be used to create 3D environments. The demo was a technical showcase of what could be achieved with CSS at the time but I wanted to see how far I could push things, so over the past few months I’ve been working on a new version with more complex models, realistic lighting, shadows and collision detection. This post documents how I did it and the techniques I used.

Creating 3D objects

The geometry of a 3D object is stored as a collection of points (or vertices), each having an x, y and z property that defines its position in 3D space. A rectangle, for example, would be defined by four vertices, one for each corner. Each corner can be individually manipulated, allowing the rectangle to be pulled into different shapes by moving its vertices along the x, y and z axes. The 3D engine will use this vertex data and some math to render 3D objects on your 2D screen.

With CSS transforms this is turned on its head. We can’t define arbitrary shapes using a set of points. Instead, we have to work with HTML elements, which are always rectangular and have two dimensional properties such as top, left, width and height to determine their position and size. In many ways this makes dealing with 3D easier as there’s no complex math to deal with — just apply a CSS transform to rotate an element around an axis and you’re done!
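As a rough illustration of "apply a CSS transform and you’re done", the sketch below builds a transform string for a rectangular element. The helper name `buildTransform` and the translate-then-rotate ordering are assumptions made for the example, not something taken from the demo.

```javascript
// Hypothetical helper: builds a CSS transform string that positions and
// rotates a rectangular element. Name and function order are assumptions.
function buildTransform({ x = 0, y = 0, z = 0, rx = 0, ry = 0, rz = 0 } = {}) {
  return (
    `translate3d(${x}px, ${y}px, ${z}px) ` +
    `rotateX(${rx}deg) rotateY(${ry}deg) rotateZ(${rz}deg)`
  );
}

// A wall pushed 200px away from the viewer and turned 90 degrees around Y.
const wallTransform = buildTransform({ z: -200, ry: 90 });

// In a browser the string is assigned straight to the element's style.
if (typeof document !== "undefined") {
  const wall = document.createElement("div");
  wall.style.cssText = "position:absolute; width:400px; height:200px;";
  wall.style.transform = wallTransform;
  document.body.appendChild(wall);
}
```

For the rotation to look three-dimensional, an ancestor element needs a `perspective` value, and nested elements need `transform-style: preserve-3d`.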

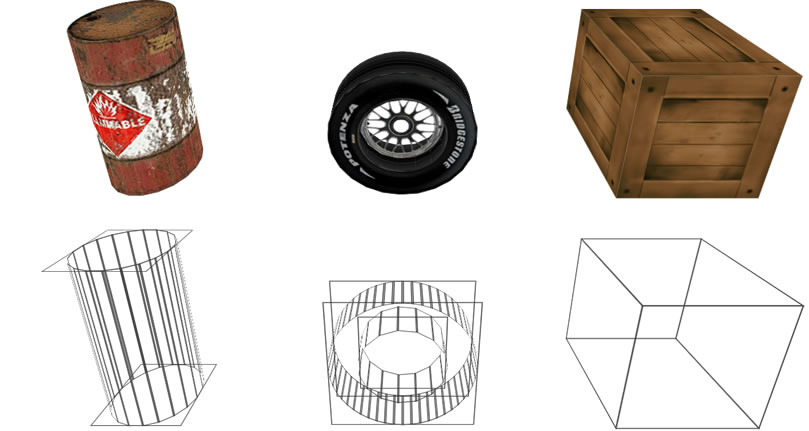

Creating objects from rectangles seems limiting at first but you can do a surprising amount with them, especially when you start playing with PNG alpha channels. In the image below you can see the barrel top and wheel objects appear rounded despite being made up of rectangles.

All objects are created in JavaScript using a small set of functions for creating primitive geometry. The simplest object that can be created is a plane, which is basically a <div> element. Planes can be added to assemblies (a wrapper <div> element), allowing the entire object to be rotated and moved as a single entity. A tube is an assembly containing planes rotated around an axis, and a barrel is a tube with a top plane and another for the bottom.
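The primitives described above could be sketched roughly as follows. All function names here (`createPlane`, `createAssembly`, `createTube`, `createBarrel`, `toElement`) are hypothetical, and the sketch assumes each plane is centred on its assembly's origin and that `radius` is measured from the axis to the middle of each side.

```javascript
// Hypothetical primitives: plain descriptors that a renderer can turn into <div>s.
function createPlane(width, height, transform = "") {
  return { type: "plane", width, height, transform };
}

function createAssembly(children = [], transform = "") {
  return { type: "assembly", children, transform };
}

// A tube: `sides` planes rotated around the Y axis and pushed out to `radius`.
function createTube(radius, height, sides) {
  // Width each side needs so that neighbouring planes meet edge to edge.
  const sideWidth = 2 * radius * Math.tan(Math.PI / sides);
  const planes = [];
  for (let i = 0; i < sides; i++) {
    const angle = (360 / sides) * i;
    planes.push(
      createPlane(sideWidth, height, `rotateY(${angle}deg) translateZ(${radius}px)`)
    );
  }
  return createAssembly(planes);
}

// A barrel: the tube's sides plus a top plane and a bottom plane.
function createBarrel(radius, height, sides) {
  const tube = createTube(radius, height, sides);
  const size = radius * 2;
  const top = createPlane(size, size, `rotateX(90deg) translateZ(${height / 2}px)`);
  const bottom = createPlane(size, size, `rotateX(-90deg) translateZ(${height / 2}px)`);
  return createAssembly([...tube.children, top, bottom]);
}

// Browser-only: turn a descriptor into DOM nodes.
function toElement(node) {
  const el = document.createElement("div");
  el.style.position = "absolute";
  el.style.transformStyle = "preserve-3d";
  el.style.transform = node.transform;
  if (node.type === "plane") {
    el.style.width = `${node.width}px`;
    el.style.height = `${node.height}px`;
    // Centre the plane on the assembly origin so rotations pivot around it.
    el.style.left = `${-node.width / 2}px`;
    el.style.top = `${-node.height / 2}px`;
  } else {
    node.children.forEach((child) => el.appendChild(toElement(child)));
  }
  return el;
}

const barrel = createBarrel(50, 120, 8);
if (typeof document !== "undefined") {
  document.body.appendChild(toElement(barrel));
}
```

Giving the top and bottom planes a circular PNG with a transparent background is what would make the ends appear round, as described earlier.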

Lighting

Lighting was by far the biggest challenge in this project. I won’t lie, the math nearly broke me, but it was worth the effort because lighting brings an incredible sense of depth and atmosphere to an otherwise flat and lifeless environment.

As I mentioned earlier, an object in your average 3D engine is defined by a series of vertices. To calculate lighting these vertices are used to compute a “normal” which can be used to determine how much light will hit the centre point of a surface. This poses a problem when creating 3D objects with HTML elements because this vertex data doesn’t exist. So the first challenge was to write a set of functions to calculate the four vertices (one for each corner) for an element that had been transformed with CSS so that lighting could be calculated.

Once that was figured out I began to experiment with different ways to light objects. In my first experiment, I used multiple background-images to simulate light hitting a surface by combining a linear-gradient with an image. The effect uses a gradient that begins and ends with the same rgba value, producing a solid block of colour. Varying the value of the alpha channel allows the underlying image to bleed through the colour block creating the illusion of shading.
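To make the vertex and normal calculation concrete, here is a minimal sketch. It assumes the element is transformed with `translate3d(...) rotateX(...) rotateY(...)` around its centre, uses a simple linear distance falloff, and expects the light not to sit exactly on the surface centre. The function names and the light's `range` property are hypothetical, and the demo's actual math may well differ.

```javascript
const sub = (a, b) => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
const dot = (a, b) => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const cross = (a, b) => [
  a[1] * b[2] - a[2] * b[1],
  a[2] * b[0] - a[0] * b[2],
  a[0] * b[1] - a[1] * b[0],
];
const normalize = (v) => {
  const len = Math.hypot(v[0], v[1], v[2]);
  return [v[0] / len, v[1] / len, v[2] / len];
};
const rad = (deg) => (deg * Math.PI) / 180;

// Four corner vertices of an element after
// `translate3d(x, y, z) rotateX(rx) rotateY(ry)` (rightmost function applies first).
function cornerVertices({ width, height, x = 0, y = 0, z = 0, rx = 0, ry = 0 }) {
  const hw = width / 2;
  const hh = height / 2;
  const local = [[-hw, -hh, 0], [hw, -hh, 0], [hw, hh, 0], [-hw, hh, 0]];
  const cx = Math.cos(rad(rx)), sx = Math.sin(rad(rx));
  const cy = Math.cos(rad(ry)), sy = Math.sin(rad(ry));
  return local.map(([px, py, pz]) => {
    // rotateY, using the CSS rotation matrix.
    const x1 = px * cy + pz * sy;
    const z1 = -px * sy + pz * cy;
    // rotateX, using the CSS rotation matrix.
    const y2 = py * cx - z1 * sx;
    const z2 = py * sx + z1 * cx;
    return [x1 + x, y2 + y, z2 + z];
  });
}

// Surface normal from two edges that share the first corner.
function surfaceNormal(v) {
  return normalize(cross(sub(v[1], v[0]), sub(v[3], v[0])));
}

// Light reaching the centre of the surface, from 0 (dark) to 1 (fully lit).
function flatShade(plane, light) {
  const v = cornerVertices(plane);
  const centre = [0, 1, 2].map((i) => (v[0][i] + v[2][i]) / 2);
  const toLight = sub(light.position, centre);
  const distance = Math.hypot(toLight[0], toLight[1], toLight[2]);
  const facing = Math.max(0, dot(surfaceNormal(v), normalize(toLight)));
  const falloff = Math.max(0, 1 - distance / light.range); // assumed linear falloff
  return facing * falloff;
}

const lamp = { position: [0, 0, 100], range: 200 };
const facingLamp = flatShade({ width: 100, height: 100 }, lamp);
const edgeOnToLamp = flatShade({ width: 100, height: 100, ry: 90 }, lamp);
```

A surface facing the light gets a high value and one turned edge-on gets a value near zero. Subtracting the result from 1 gives an alpha value for a black overlay.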

To achieve the second darkest effect in the above image I apply the following styles to an element:

element {

background: linear-gradient(rgba(0,0,0,.8), rgba(0,0,0,.8)), url("texture.png");

}

In practice, these styles are not predefined in a stylesheet; they are calculated dynamically and applied directly to the element’s style property using JavaScript.
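Generating that style from JavaScript might look something like the sketch below. The function name `flatShadeBackground` is made up for the example, and the `shade` value is assumed to be the darkness computed for the surface (0 is fully lit, 1 is black).

```javascript
// Hypothetical helper: returns a background value that overlays a solid
// block of translucent black on top of a texture image.
function flatShadeBackground(shade, textureUrl) {
  // Clamp to the valid alpha range and trim to two decimal places.
  const alpha = Number(Math.min(1, Math.max(0, shade)).toFixed(2));
  const colour = `rgba(0,0,0,${alpha})`;
  return `linear-gradient(${colour}, ${colour}), url("${textureUrl}")`;
}

const shaded = flatShadeBackground(0.8, "texture.png");

// In a browser the value is written directly to the element's style property.
if (typeof document !== "undefined") {
  const surface = document.createElement("div");
  surface.style.background = shaded;
}
```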

This technique is referred to as flat shading. It’s an effective method of shading; however, it results in the entire surface being shaded uniformly. For example, if I created a 3D wall that extended into the distance, it would be shaded identically along its entire length. I wanted something that looked more realistic.

A second stab at lighting

To simulate real lighting, surfaces need to darken as they extend beyond the range of a light source, and if multiple lights hit the same surface it should shade accordingly.

To flat shade a surface I only had to calculate the light hitting the centre point, but now I need to sample the light at various points on the surface so I can determine how light or dark each point should be. The math required to create this lighting data is identical to that used for flat shading.

Initially, I tried producing a radial-gradient from the lighting data to use in place of the linear-gradient in my earlier attempt. The results were more realistic but multiple light sources were still a problem because layering multiple gradients on top of each other causes the underlying texture to get progressively darker. If CSS supported image compositing and blending modes (they are coming) it may have been possible to make radial gradients work.

The solution was to use a <canvas> element to programmatically generate a new texture that could be used as a light map. With the calculated lighting data I could draw a series of black pixels, varying each one’s alpha channel based on the amount of light that would hit the surface at that point. Finally the canvas.toDataURL() method was used to encode the image and use it in place of the linear-gradient in my first experiment. Repeating this process for each surface produces a realistic lighting effect for the entire environment.

Calculating and drawing these textures is intensive work. The basement ceiling and floor are both 1000 x 2000 pixels in size. Creating a texture to cover this area isn’t practical, so I only sample lights every 12 pixels, which produces a light map 12 times smaller in each dimension than the surface it will cover. Setting background-size: 100% causes the browser to scale the texture up using bilinear (or similar) filtering so it fits the surface area. The scaling effect produces a result that is almost identical to a light map generated for every single pixel.
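The sampling step can be sketched as follows. This is a simplified illustration rather than the demo's code: lights are assumed to be expressed in the surface's own coordinate space (with `z` as the height above the surface, greater than zero), falloff is assumed to be linear, and `buildLightMap` and `lightMapToDataURL` are hypothetical names.

```javascript
// Build RGBA pixel data for a light map, sampling once every `step` pixels.
// Every pixel is black; its alpha is how much of the texture to darken.
function buildLightMap(width, height, step, lights) {
  const cols = Math.ceil(width / step);
  const rows = Math.ceil(height / step);
  const data = new Uint8ClampedArray(cols * rows * 4); // RGB stay 0 (black)
  for (let row = 0; row < rows; row++) {
    for (let col = 0; col < cols; col++) {
      // Sample at the centre of each cell.
      const x = (col + 0.5) * step;
      const y = (row + 0.5) * step;
      let lit = 0;
      for (const light of lights) {
        const dist = Math.hypot(x - light.x, y - light.y, light.z);
        const facing = light.z / dist; // cosine of the angle to the surface normal
        const falloff = Math.max(0, 1 - dist / light.range); // assumed linear
        lit += facing * falloff * light.intensity; // several lights add up
      }
      data[(row * cols + col) * 4 + 3] = Math.round((1 - Math.min(1, lit)) * 255);
    }
  }
  return { cols, rows, data };
}

// Browser-only: draw the pixels to a canvas and encode them as a data URL.
function lightMapToDataURL(map) {
  const canvas = document.createElement("canvas");
  canvas.width = map.cols;
  canvas.height = map.rows;
  const pixels = new ImageData(map.data, map.cols, map.rows);
  canvas.getContext("2d").putImageData(pixels, 0, 0);
  return canvas.toDataURL();
}

const ceilingMap = buildLightMap(1000, 2000, 12, [
  { x: 500, y: 400, z: 150, range: 900, intensity: 1 },
]);

if (typeof document !== "undefined") {
  const ceiling = document.createElement("div");
  ceiling.style.background =
    `url("${lightMapToDataURL(ceilingMap)}") 0 0 / 100% 100%, ` +
    `url("texture.png") 0 0 / auto auto`;
}
```

Because light contributions are summed before the alpha is written, overlapping lights brighten a point instead of stacking darkness, which was the problem with layered gradients.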

The background style rule for applying a light map and texture to a surface looks something like this:

element {

background: url("data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAACoAAAAyCAYAAAAqRkmtAAACiUlEQVRoQ9VZa0vEQAy8+/8/yPcbFVFEEREREcS/4eNSmpKmmU12t/XYD6W3beGmk8kk2a5Xq9XR5vjeHD+B47d/xjrTtdSxud3dl+d+OTmt+yvDmX4c9n8eAbtVoAeCyRRYBknMb4XRfRVyC6wEqYFK0Cj0HG4OfSr8HG56ZhR6AhoJO2u4JPxInxK4BDYCyYu9YOglk/xbsymvy8RhpjlrNEArqWRCrWlBQEsYRWC98EfAmlm/W8FoBKzWp2aT11KbZugJ6NKMzpJMFlANPJX1Wpdyjdj0NKozv9PoTjD0pRYVDb2b9VGgVtZHNKrLpuelEzb5DRhoRKel1alGox1wGfpmgFIYPbCIUa+MRiuTpdMJox7QrSdTM/bUlOFzZ7SERqM+6pbQZpqSZtq8ZhrnZkaRZoa7ZsblZjYgjgM1vmYClYOd1+LBnpRM9iQItLTFQ/0o6vLhXH9aCTTaOWnAehp1K9NZP4rUbufo2UnP9ajV82b6Tg70FudiZtKtHmrtoiOIpU9PpzD0Fwoo2n6sbZo9gJJZcwq9DGi0JpFyhjuoU7pxtSCjtXP9aDfv2gFaOoJofaKst5JJ+ukwM91kMirtyMp0a5MsOtyZicSobxMaLd3K0dZksZlt+HcZoUdsImZTY0g20PtA6OeypohFQR99MCqTDnnqA0NKp7My+ggYjerz34A+LQRUsolCrnWaNPznSqCoeyo1e/bV0T4+LV4KgCJwJAPNZI41WfV+MPzXAFDLluY2e83kqDoR2jcD6ByJhJoRrUtea31OgL4roCU9aORDWMRDIav0Fh890JR3lnz/1GVU1nsGlJX1n4UaRQkV6ZpQ+Uwm05ej0TkSycp8i2Hoo3+utUtvDhk9pwAAAABJRU5ErkJggg==") 0 0 / 100% 100%, url("texture.png") 0 0 / auto auto;

}

Which produces the final lit surface:

Casting Shadows

Settling on canvas for lighting made casting shadows possible. The logic behind shadow casting turned out to be rather easy. Ordering surfaces based on their proximity to a light source allowed me to not only produce a light map for a surface but also determine if a previous surface had already been hit by the current ray of light. If it had, I could set the relevant light map pixel to be in shadow. This technique allows one image to be used for both lighting and shadows.
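One way to express that shadow test is sketched below. It is an assumption-heavy illustration, not the demo's implementation: each surface is described by a corner (`origin`) and two perpendicular edge vectors (`u`, `v`), and a sample point counts as shadowed if the straight line from the light to that point crosses a surface that sits nearer to the light. All names are hypothetical.

```javascript
const sub = (a, b) => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
const dot = (a, b) => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const cross = (a, b) => [
  a[1] * b[2] - a[2] * b[1],
  a[2] * b[0] - a[0] * b[2],
  a[0] * b[1] - a[1] * b[0],
];

// Does the segment from `from` to `to` pass through the rectangle?
function segmentHitsRect(from, to, rect) {
  const dir = sub(to, from);
  const normal = cross(rect.u, rect.v);
  const denom = dot(normal, dir);
  if (Math.abs(denom) < 1e-9) return false; // segment is parallel to the plane
  const t = dot(normal, sub(rect.origin, from)) / denom;
  if (t <= 1e-6 || t >= 1 - 1e-6) return false; // hit is not strictly in between
  const hit = [from[0] + dir[0] * t, from[1] + dir[1] * t, from[2] + dir[2] * t];
  const local = sub(hit, rect.origin);
  // Project onto the edges; both fractions must fall inside the rectangle.
  const a = dot(local, rect.u) / dot(rect.u, rect.u);
  const b = dot(local, rect.v) / dot(rect.v, rect.v);
  return a >= 0 && a <= 1 && b >= 0 && b <= 1;
}

// Order surfaces by the distance from the light to their centre, nearest first.
function sortByDistance(light, surfaces) {
  const distance = (s) => {
    const centre = [0, 1, 2].map((i) => s.origin[i] + (s.u[i] + s.v[i]) / 2);
    const d = sub(centre, light);
    return Math.hypot(d[0], d[1], d[2]);
  };
  return [...surfaces].sort((s1, s2) => distance(s1) - distance(s2));
}

// A point is in shadow if any surface nearer the light blocks the ray to it.
function isInShadow(point, light, nearerSurfaces) {
  return nearerSurfaces.some((s) => segmentHitsRect(light, point, s));
}

const light = [0, 0, 100];
const floor = { origin: [-100, -100, 0], u: [200, 0, 0], v: [0, 200, 0] };
const crateTop = { origin: [-10, -10, 50], u: [20, 0, 0], v: [0, 20, 0] };

const ordered = sortByDistance(light, [floor, crateTop]);
const nearerThanFloor = ordered.slice(0, ordered.indexOf(floor));
const underCrate = isInShadow([0, 0, 0], light, nearerThanFloor);
const openFloor = isInShadow([80, 80, 0], light, nearerThanFloor);
```

When filling a light map, a shadowed sample would simply be written with a high alpha instead of its computed light value, so the same image carries both lighting and shadows.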

Collisions

Collision detection uses a height map – a top-down image of the level that uses colour to represent the height of objects within it. White represents the deepest and black the highest possible position the player can reach. As the player moves around the level I convert their position into 2D coordinates and use them to check the colour in the height map. If the colour is lighter than at the player’s last position, the player falls. If it’s slightly darker, the player can step up or jump on to an object. If the colour is much darker, the player comes to a stop – I use this for walls and fences. Currently, this image is drawn by hand but I will be looking into creating it dynamically.
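The height map check could be sketched like this. The grey values are held in a plain 2D array here, whereas in a browser they would more likely be read from a canvas with `getImageData`. The `scale` factor, the `maxStep` threshold for "slightly darker" and the function name are all assumptions for the example.

```javascript
// Grey values: 255 (white) is the deepest point, 0 (black) the highest.
// `scale` is the number of world units covered by one height map pixel.
function resolveMove(heightMap, scale, last, next, maxStep = 32) {
  const sample = (pos) => {
    const row = heightMap[Math.floor(pos.z / scale)];
    return row === undefined ? undefined : row[Math.floor(pos.x / scale)];
  };
  const from = sample(last);
  const to = sample(next);
  if (to === undefined) return "blocked"; // outside the map
  if (to > from) return "fall"; // lighter: the ground is lower
  if (to === from) return "walk"; // same height
  if (from - to <= maxStep) return "step"; // slightly darker: step or jump up
  return "blocked"; // much darker: a wall or fence
}

const level = [
  [200, 200, 200],
  [200, 180, 0],
  [255, 200, 200],
];

const ontoCrate = resolveMove(level, 10, { x: 5, z: 5 }, { x: 15, z: 15 });
const intoWall = resolveMove(level, 10, { x: 15, z: 15 }, { x: 25, z: 15 });
const intoPit = resolveMove(level, 10, { x: 5, z: 5 }, { x: 5, z: 25 });
```

Here the player can step onto the slightly raised tile, is stopped by the black tile, and falls when moving onto the white one.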

What’s next?

Well, a game would be a natural next step for this project — it would be interesting to see how scalable these techniques are. In the short term, I’ve started working on a prototype CSS3 renderer for the excellent Three.js that uses these same techniques to render geometry and lights created by a real 3D engine.

This is one of the most amazing things I've ever seen. Well done!

This is completely insane. I would like to think so that im a good web developer and quite skill with JS/CSS3 and HTML5 but this is like a sci-fi movie to me. Very well done, would love to see some more live examples.

Amazing Keith. Next time I ask you for some CSS fixes I'll know what to expect.

Keith, mate, I always said you were a frickin' genius. This is the most amazing thing I have seen in a web browser, without doubt. Awesome.

Crazy and so good! Thanks to share it with us.

For the first time I now truely understand the possibilities of HTML5/css/js ... let the online gaming begin!! Can't wait to see what you do next :-)

Holly s***

I've never seen things this way before.

Who's up to recreate Duke Nukem 3D in HTML/CSS/JS ? \o/

Highly impressive dude :)

Pure awesomeness! :)

Ever thought of using traditional FPS collider approach (ellipsoid against plane tests) for collision? If you're testing against convex solids/cells, you can rule out any possible collision so long as it doesn't touch any of the given planes formed by the convex solid. The lighting is really good though.

Somehow, the mousemove detection event doesn't seem to work well on my version of Safari. Considered using a drag controller like what I did for my fps demos? My drag controller also works fine on mobile because i'm using Hammer.js api. However, having something on mobile isn't easy in terms of performance/features/memory limits. Best uses is some Cubic VR and some 3d geometry, or some simple 3d geometry in a single room.

Extremely impressive, Keith! Keep up the awesome work.

Great experiment! On the latest stable build of Chrome yet (Version 24.0.1312.56) running on Linux, your demo is fully functionnal but models are clipping while moving.

Nice job in all case, very interesting!

Unfortunately Chrome does suffer from clipping issues. I've documented this, along with issues in other browsers in a previous blog post

This is an amazing job!!!

One of the most amazing pieces of graphic and web design I have ever seen. Ground breaking. Congrats.

This is Insane ... Something I never thought possible ! Well, there is definitely no limit to coding ...

This is beautiful! Any idea how it compares performance wise with WebGL?

Amazing and insane at the same time. Never thought anything like this is possible to achieve using only HTML and CSS.

This is just ridiculously amazing. Nicely done.

This is a really amazing job, it would be even better if you use Pointer Lock :)

Pointer lock and fullscreen are implemented in the newer version of the engine

holy molly! (picks up jaw from the floor and runs circles around the table)

This is so cool! I'm sure it has a lot of limitations, but i don't stop people from proving me wrong. I'm curious to see the next steps in these new turn of events for the 'web fundamentals goes 3d gaming'.

alert(me.with.more.updates);thumbs up Keith Clark!

Hi Keith,

This is absolutely killer work! I shared this with the team here at DZone, where I run the HTML5 Zone, and we're all really impressed. [edit: email address removed].

Thanks, and keep up the awesome work, Allen

truly spectacular. I see the future in your visionary work. I can only imagine how much time and thought went it. thanks for sharing.

You sir, are a genius!

Good gravy, this is crazy! Who cares about clipping on Chrome? IT'S 3D AND LIGHTING WITH DIVS! AAAAHHH! :) Excellent Job.

This is truly awesome, many thanks for sharing your knowledge!

I'm in awe.

This is one amazing experiment and very impressive!

Complete brilliance. Thanks for this amazing exercise.

Great work. Please add also your own shadow

Awesome, just awesome.

Wow, unbelievable! I'll be interested to see where this leads, tie it in with web sockets and you've got an awesome platform for a multiplayer game.

I think need using CSS shaders for lighting. I long ago put out lighting in CSS shaders. I can re-do. My recipe was simple (normal 0 1 0, lighting like WebGL) and complex (required for some features of the matrices to transform normal). adobe.com/…/css-shaders.html

Amazing! My respect!

I hate to rain on your parade, but what will the rasterization process cost on the CSS software renderers, battery life on low end mobile devices etc...this is Flash all over again and wasteful, only the problem is already fixed and the reason for GPU solutions such as WebGL and Stage3D.

Wow. Seriously impressive.

*jawdrop* This is amazing! Thanks for sharing!

This is one of the most awesome things I have seen! Well done!

absolutely awesome! thanks for sharing :)

Big props. Brilliant.

Incredible stuff Keith!!!

My 8 year old came into the office, saw the demo and wanted to play the game.

Impressive work.

Just too good to believe. It is just unimaginable that such type of work can be created with CSS3 and HTML5. Great Work!

You-make-me-sick.

This is a fantastic piece of work. The lighting is phenomenal.

Very clever. I salute you :)